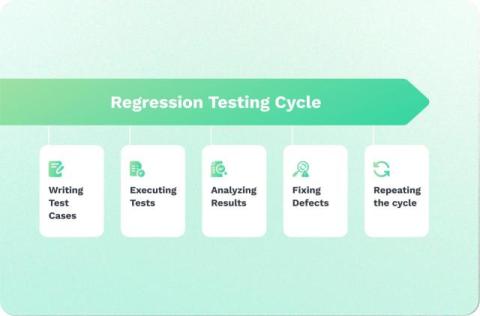

What Is Regression Testing? Definition, Types, and Tools

Regression testing is a software testing process that verifies that your existing features, designs, and dependencies still work as expected after changes or updates are made to your codebase. It catches unintended bugs or breakages introduced by modifications such as new features, bug fixes, or configuration changes. Every change carries a risk of breaking existing functionality, which can delay a release or postpone a launch.
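To make the definition concrete, here is a minimal sketch of a regression test in Python using the standard `unittest` module. The `cart_total` function and its expected values are hypothetical, invented only for illustration. The tests pin down behavior that already works, so a later change that alters it is flagged.

```python
import unittest


def cart_total(prices, discount_rate=0.0):
    """Hypothetical function under test: sum the prices, then apply a discount."""
    subtotal = sum(prices)
    return round(subtotal * (1 - discount_rate), 2)


class CartTotalRegressionTest(unittest.TestCase):
    """Pins down existing behavior so later changes cannot silently alter it."""

    def test_total_without_discount_is_unchanged(self):
        # Behavior that worked before any new feature was added.
        self.assertEqual(cart_total([10.00, 5.50]), 15.50)

    def test_discount_still_applies(self):
        # Re-run after every change touching pricing logic.
        self.assertEqual(cart_total([100.00], discount_rate=0.2), 80.00)
```

Assuming the code is saved as a module, running `python -m unittest` after each change re-executes these checks. A failure signals that the change broke previously working behavior.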