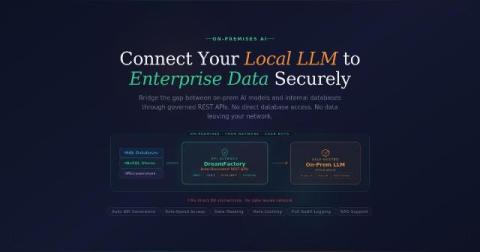

Connecting On-Premises LLMs to Enterprise Databases and APIs | DreamFactory

As organizations increasingly recognize the value of generative artificial intelligence, many are moving away from cloud-hosted models in favor of on-premises large language models (LLMs). This shift is driven primarily by the need to protect sensitive corporate data, maintain regulatory compliance, and reduce latency. However, an isolated local model offers limited utility: it cannot see the records, documents, and services the business actually runs on. To unlock the potential of an on-premises LLM, enterprises must connect it to their internal databases and APIs.