
MLOps World Toronto: MLOps Beyond Training: Simplifying and Automating the Operational Pipeline

Performance considerations for loading data into BigQuery

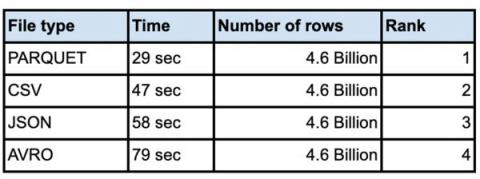

Performance considerations for loading data into BigQuery for various file types.

People Were Skeptical of Data Warehouses. Now History Is Repeating.

Performance considerations for loading data into BigQuery

It is not unusual for customers to load very large data sets into their enterprise data warehouse. Whether you are doing an initial data ingestion with hundreds of TB of data or incrementally loading from your systems of record, performance of bulk inserts is key to quicker insights from the data. The most common architecture for batch data loads uses Google Cloud Storage (object storage) as the staging area for all bulk loads.
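As a rough illustration of that staging pattern, here is a minimal sketch that assumes the files are already staged in Cloud Storage as Parquet and that the `google-cloud-bigquery` client library is installed. The bucket, prefix, and table names are hypothetical, and the append write disposition is an assumption, not something the article prescribes.

```python
def staging_uri(bucket: str, prefix: str, extension: str = "parquet") -> str:
    """Build a wildcard URI matching every staged file under a prefix."""
    return f"gs://{bucket}/{prefix.strip('/')}/*.{extension}"


def load_from_staging(table_id: str, uri: str) -> int:
    """Run one batch load job from Cloud Storage and return the table's row count."""
    # Imported here so the URI helper stays usable without the client library.
    from google.cloud import bigquery

    client = bigquery.Client()  # relies on application default credentials
    job_config = bigquery.LoadJobConfig(
        source_format=bigquery.SourceFormat.PARQUET,  # assumed staging format
        write_disposition=bigquery.WriteDisposition.WRITE_APPEND,  # assumed
    )
    load_job = client.load_table_from_uri(uri, table_id, job_config=job_config)
    load_job.result()  # block until the job finishes; raises on failure
    return client.get_table(table_id).num_rows
```

A call might look like `load_from_staging("my-project.sales.orders", staging_uri("my-staging-bucket", "exports/orders"))`, where every name is a placeholder.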

8 Essential Ecommerce Google Analytics Dashboards Recommended by Ecommerce Experts

Accelerating IT to the Speed of Business

Businesses today adapt to change with breathtaking speed. Lines of business now follow their products out the door digitally and continue tracking usage throughout the lifecycle. This gives companies valuable insights into how customers use and interact with their products, enabling them to analyze and evolve solutions while nurturing customer relationships.

Taking charge of your health through data - Unmesh Srivastava

How to stop failing at data

Innovate or die. It’s one of the few universal rules of business, and it’s one of the main reasons we continue to invest so heavily in data. Only through data can we get the key insights we need to innovate faster, smarter, better and keep ahead of the market. And yet, the vast majority of data initiatives are doomed to fail. Nearly nine out of 10 data science projects never make it to production.

Competitive research with an edge: scrape any webpage with Talend

We’re back with another Job of the Week! The title is admittedly growing on me... This week’s job takes us into the world of data scraping. First, let’s answer two common questions.