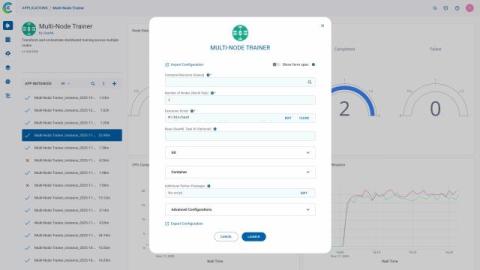

Multi-Node Training with ClearML

Orchestrating distributed AI workloads

Distributed (multi-node) training has become a requirement rather than an optimization for many modern AI workloads. As model sizes grow, datasets expand, and training timelines tighten, teams increasingly rely on multiple machines, often with multiple GPUs each, to complete training efficiently.