Scalable AI Economics: Achieving Secure, Hybrid Intelligence with Cloudera, AMD, and Dell Technologies

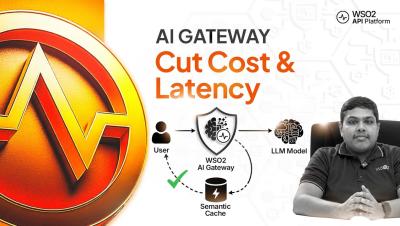

Enterprise interest in generative and agentic AI has accelerated dramatically over the past two years. Organizations across industries are exploring how AI agents, intelligent assistants, and automation can improve productivity, streamline operations, and unlock insights from growing volumes of enterprise data. Yet as enthusiasm grows, so do questions about cost, security, and operational complexity.