
What is Self Service Analytics? The Role of Accessible BI Explained

AI at Scale isn't Magic, it's Data - Hybrid Data

A recent VentureBeat article, “4 AI trends: It’s all about scale in 2022 (so far),” highlighted the importance of scalability. I recommend reading the entire piece, but to me the key takeaway – AI at scale isn’t magic, it’s data – is reminiscent of the 1992 presidential election, when political consultant James Carville succinctly summarized the key to winning: “it’s the economy.”

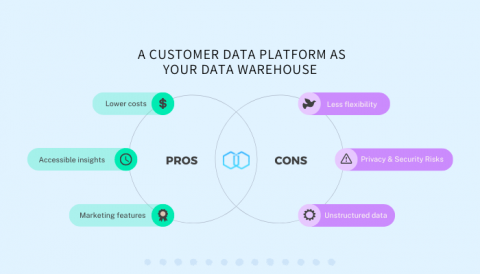

Pros & Cons of Using a Customer Data Platform as Your Data Warehouse

Using Advanced Functions in a report

Enterprise data warehouses: Definition and guide

An enterprise data warehouse is critical to the long-term viability of your business.

How Twitter maximizes performance with BigQuery

How to Accelerate HuggingFace Throughput by 193%

Deploying models is becoming easier every day, especially thanks to excellent tutorials like Transformers-Deploy, which explains how to convert and optimize a Hugging Face model and deploy it on the NVIDIA Triton inference engine. NVIDIA Triton is an exceptionally fast and solid tool and should be very high on the list when searching for ways to deploy a model. Our developers know this, of course, so ClearML Serving uses NVIDIA Triton on the backend if a model needs GPU acceleration.

Live: Educational Services for Snowflake Data Governance

Unravel Automated Data Quality Datasheet

Unravel now pulls data quality checks from external tools into its single-pane-of-glass, full-stack observability view.