Commerzbank | Unleashing Hidden Data Treasures for Customers

Building and Managing the Modern Datastore: The Data Lakehouse

MongoDB vs. PostgreSQL: Detailed Comparison of Database Structures

Unstructured Data Now Generally Available in Snowflake, Processing with Snowpark in Public Preview

We’re excited to announce the general availability of the unstructured data management functionality in Snowflake. We launched the public preview of this functionality in September 2021, and since then customers across industries have adopted it for a variety of use cases. These use cases include storing and securing call center recordings, securely sharing PDF documents in Snowflake Data Marketplace, storing medical images and extracting data from them, and many more.

Helping I.T. take flight - Mark Settle

Why You Need a Fully Automated Data Pipeline

Business Intelligence on the Cloud Data Platform: Approaches to Schemas

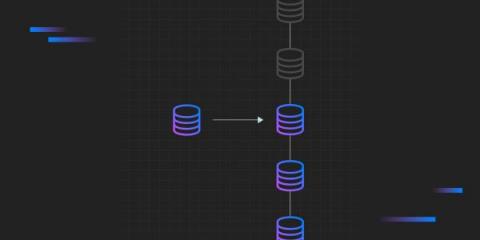

What is data replication and why is it important?

Your company needs data replication for analytics, better performance and disaster recovery.

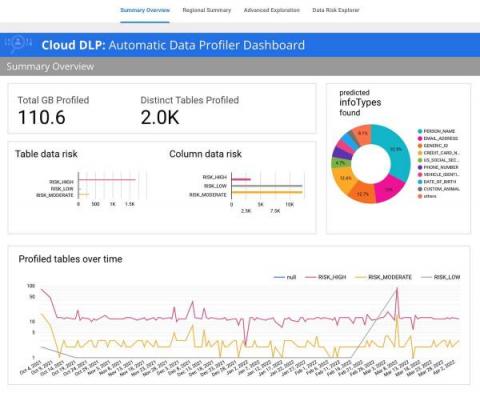

Automatic data risk management for BigQuery using DLP

Protecting sensitive data and preventing unintended data exposure is critical for businesses. However, many organizations lack the tools to stay on top of where sensitive data resides across their enterprise. It’s particularly concerning when sensitive data shows up in unexpected places – for example, in logs that services generate, when customers inadvertently send it in a customer support chat, or when managing unstructured analytical workloads.