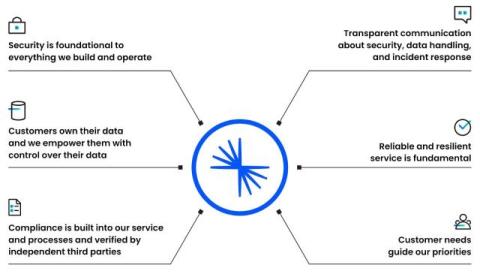

Empowering Customers: The Role of Confluent's Trust Center

The foundation of every successful customer relationship is trust. At Confluent, we understand that for our customers and prospects to innovate with confidence, they must be able to trust the security and integrity of our platform. Our commitment goes beyond providing a secure product: we aim to give customers the tools and transparency they need to feel confident in their data streaming architectures.