ClearML Enterprise v3.28: Usage Metering, Policy Enhancements, and Smarter Admin Controls

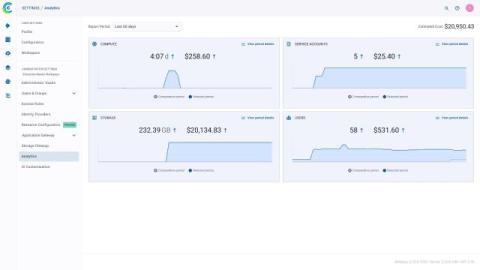

Author: Adam Wolf

ClearML Enterprise v3.28 adds features and improvements that help administrators monitor usage, enforce policies, and streamline operations across large, multi-team environments. This release introduces enhanced usage metering with a simplified interface, improved resource policy management and dataset controls, and UI enhancements that give AI teams greater clarity, control, and productivity.