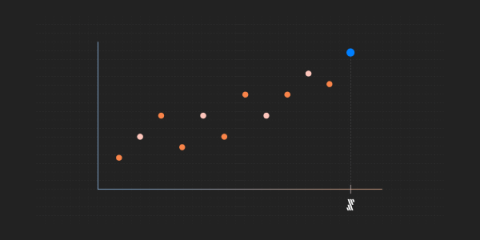

Fivetran named in 2022 Gartner Magic Quadrant and Market Share Analysis reports

For the third year in a row, we were recognized for our "ability to execute" and "completeness of vision."

Is Deno better than Node.js?

The Deno runtime for JavaScript and TypeScript is built on Rust and the V8 JavaScript engine, the same engine that powers Node.js. Developed by Ryan Dahl, Node.js' original creator, it is designed to correct mistakes he made when he first designed and released Node.js in 2009. To recap, he was dissatisfied with the lack of security, the way modules are resolved through node_modules, and the divergence from browser behaviour, among other things, which prompted him to build Deno.

Why you should use caching for your Codemagic Unity projects

TL;DR: Caching the Library folder can significantly speed up your Unity builds in CI/CD environments. Codemagic CI/CD also allows you to cache the Unity version of your project so that you don’t need to re-download it each time. When you work on complex Unity projects and start adding more and more resources, you’ll quickly notice that the build times in your Codemagic workflow grow as well.

Developer inspiration: live features showcase with Ably examples

To deliver a great user experience, whether that’s attending a live virtual event, chatting with friends or collaborating on a document, you need to ensure that the core realtime functionality works in any situation and that you provide a feature-rich application. Disruption, downtime or lag results in a poor realtime user experience.

New Practices in Data Governance and Data Fabric for Telecommunications

The management of data assets in multiple clouds is introducing new data governance requirements, and it is both useful and instructive to have a view from the TM Forum to help navigate the changes.

Top 10 must-read books for data and analytics leaders in 2022

It’s that time of year - back to school, back to books, and our annual must-read books for data and analytics leaders. Given the pace of change in our industry, continuous learning is a must, whether through networking, podcasting, or reading. To cull this year’s list, I focused mainly on books published in the last two years with the themes of data, analytics and AI. I scoured lists and reviews on Amazon, solicited ideas from social networks and got to reading.

Appian as the Agility Layer for your ERP

In government agencies around the world, large enterprise legacy systems are what stand in the way of desperately needed modernization programs. Such legacy systems can be large enterprise resource planning (ERP) implementations or custom-built applications using complex codebases. Simply put, they are neither supportable nor upgradable, and they do not provide the rich user experience customers have become accustomed to.

Why You Need Data Governance for Analytics

How can you ensure data quality and security across your data analytics pipeline? With data governance: the exercise of authority and control over your data assets. It includes tracking, maintaining and protecting data at every stage of the lifecycle.