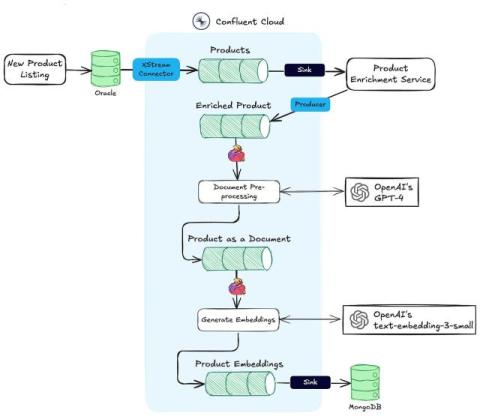

From Oracle to MongoDB: How to Modernize Your Tech Stack for Real-Time AI Decisioning

Playlists for every mood and occasion. Media recommendations grouped by the most niche themes in your watch history. Sophisticated algorithms that tailor pay-per-click ads to each customer's experience. Whether you call them digital natives, disruptors, or just tech giants, the likes of Spotify, Netflix, and Amazon have long made uncannily personal experiences a key part of their differentiation and business models.