How to Best Format Your Google Sheets for Databox Syncing

Agility in action: introducing Talend Change Data Capture (CDC)

Data volumes are increasing exponentially with no sign of slowing. Experts predict that by 2025 the global volume of data will reach 181 zettabytes, more than four times the pre-COVID level of 2019. Data analysts at Centogene agree: “Every mouse click, keyboard button press, swipe or tap is used to shape business decisions. Everything is about data these days - data is information, and information is power.”

Does Financial Crime Increase During a Recession?

The dynamic, interconnected world of global ecommerce, cryptocurrencies, and alternative payments puts increasing pressure on anti-financial-crime measures to keep pace and evolve alongside these developments. Consumers worldwide are projected to use mobile devices to make more than 30.7 billion ecommerce transactions by 2026, a five-fold increase over the 6.1 billion predicted for 2022.
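The five-fold figure follows directly from the two projections quoted in the excerpt:

```latex
\frac{30.7 \times 10^{9}\ \text{transactions (2026)}}{6.1 \times 10^{9}\ \text{transactions (2022)}} \approx 5.03
```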

What's new in ThoughtSpot Analytics Cloud 8.5.0

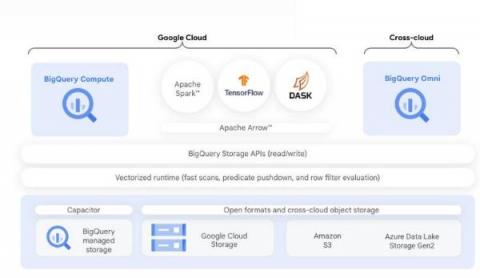

Scalable Python on BigQuery using Dask and NVIDIA GPUs

BigQuery is Google Cloud’s fully managed, serverless data platform, and it supports querying with ANSI SQL. BigQuery also has a data lake storage engine that unifies SQL queries with open source processing frameworks such as Apache Spark, TensorFlow, and Dask. BigQuery storage exposes an API layer that open source (OSS) engines can use to process the data directly, so code written in languages like Python can be combined with structured SQL on the same data platform.
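Running against BigQuery itself requires a Google Cloud project and credentials, so this minimal sketch uses Python's built-in `sqlite3` module as a stand-in to illustrate the general pattern the excerpt describes: let SQL handle the structured filtering and aggregation, then keep working on the result in plain Python. It does not use the BigQuery Storage API, Dask, or GPUs, and the `trips` table and its columns are hypothetical.

```python
import sqlite3

# Hypothetical in-memory table standing in for a warehouse table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE trips (city TEXT, distance_km REAL)")
conn.executemany(
    "INSERT INTO trips VALUES (?, ?)",
    [("Paris", 3.2), ("Paris", 5.0), ("Berlin", 2.5), ("Berlin", 7.5), ("Rome", 4.0)],
)

# Step 1: structured SQL does the grouping and aggregation.
rows = conn.execute(
    """
    SELECT city, SUM(distance_km) AS total_km, COUNT(*) AS n_trips
    FROM trips
    GROUP BY city
    ORDER BY city
    """
).fetchall()

# Step 2: ordinary Python continues on the SQL result
# (average trip length per city, rounded to two decimals).
summary = {city: round(total / n, 2) for city, total, n in rows}
print(summary)  # {'Berlin': 5.0, 'Paris': 4.1, 'Rome': 4.0}

conn.close()
```

In a real BigQuery setup, the SQL step would run in the warehouse and the Python step would run in an OSS engine such as Dask; the split of responsibilities is the same.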

[MLOps] From experiment management to model serving and back. A complete use case, step-by-step!

Data challenges: From mainframes to the modern data stack

The sheer quantity and diversity of data sources make today’s landscape strikingly different from the mainframe era, and that landscape requires a new set of tools.

Most Valuable Excel KPI Dashboards for Any Business (Sourced from Experts)

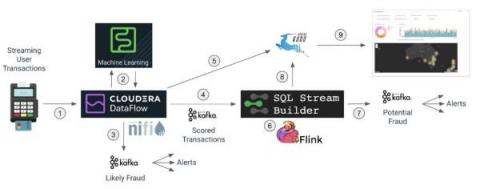

Fraud Detection With Cloudera Stream Processing Part 2: Real-Time Streaming Analytics

In part 1 of this blog series, we discussed how Cloudera DataFlow for the Public Cloud (CDF-PC), the universal data distribution service powered by Apache NiFi, makes it easy to acquire data wherever it originates and move it efficiently, making it available to other applications in a streaming fashion.