What Is a Data Pipeline and Why Your Ecommerce Business Needs One

Kafka best practices: Monitoring and optimizing the performance of Kafka applications

Apache Kafka is an open-source distributed event streaming platform used by thousands of companies for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications. Administrators, developers, and data engineers who run Kafka clusters often struggle to understand what is happening inside their Kafka deployments.

MLOps World Toronto: MLOps Beyond Training Simplifying and Automating the Operational Pipeline

Demo - Exploiting a data fabric to drive data literacy and data democratisation

8 Essential Ecommerce Google Analytics Dashboards Recommended by Ecommerce Experts

Performance considerations for loading data into BigQuery

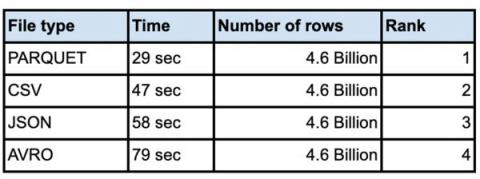

It is not unusual for customers to load very large data sets into their enterprise data warehouse. Whether you are doing an initial ingestion of hundreds of TB of data or incrementally loading from your systems of record, the performance of bulk inserts is key to getting insights from the data sooner. The most common architecture for batch data loads uses Google Cloud Storage (object storage) as the staging area for all bulk loads.
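As a rough illustration of the staging pattern described above, the sketch below builds the request body for a BigQuery load job that reads staged files from Cloud Storage. The field names follow the load configuration of the BigQuery REST API (`sourceUris`, `destinationTable`, `sourceFormat`, `writeDisposition`); the helper name `build_load_job`, the project, dataset, table, and bucket names are all hypothetical, and the code only constructs the payload without submitting it.

```python
import json

# Source formats accepted by BigQuery load jobs (REST API enum values).
SUPPORTED_FORMATS = {"CSV", "NEWLINE_DELIMITED_JSON", "AVRO", "PARQUET", "ORC"}


def build_load_job(project, dataset, table, source_uris,
                   source_format="AVRO", write_disposition="WRITE_APPEND"):
    """Build the body of a load job that reads files staged in Cloud Storage.

    Hypothetical helper: it only assembles the dictionary, it does not
    call any Google Cloud API.
    """
    if source_format not in SUPPORTED_FORMATS:
        raise ValueError(f"unsupported source format: {source_format}")
    for uri in source_uris:
        # Staged files are addressed by gs:// URIs in the staging bucket.
        if not uri.startswith("gs://"):
            raise ValueError(f"not a Cloud Storage URI: {uri}")
    return {
        "configuration": {
            "load": {
                "sourceUris": list(source_uris),
                "destinationTable": {
                    "projectId": project,
                    "datasetId": dataset,
                    "tableId": table,
                },
                "sourceFormat": source_format,
                "writeDisposition": write_disposition,
            }
        }
    }


if __name__ == "__main__":
    # Placeholder names; replace with your own project and staging bucket.
    job = build_load_job(
        "example-project", "staging", "orders",
        ["gs://example-bucket/orders/*.avro"],
    )
    print(json.dumps(job, indent=2))
```

Keeping the file format and write disposition as explicit parameters makes it easy to compare load behaviour across file types, which is the comparison the article is concerned with.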

People Were Skeptical of Data Warehouses. Now History Is Repeating.

Performance considerations for loading data into BigQuery

Performance considerations for loading data into BigQuery for various file types.