How to Break Off Your First Microservice

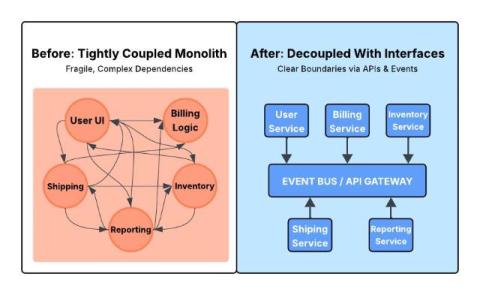

The road from a monolithic architecture to a cloud-native microservices application is rarely a straightforward engineering exercise. There's often a significant gap between understanding the theoretical benefits of microservices and successfully extracting individual services from a mature, long-running codebase. Many teams exploring a microservices migration struggle most with the first extraction. How do you make that initial step concrete, low-risk, and reversible?