Harri: Without Keboola, we would need a team 3x the size to get the same amount of work done.

Fraud Processing With Cloudera Stream Processing

How Ecommerce Data Influences B2B Analytics

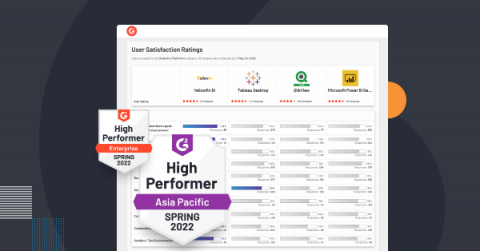

Compare Yellowfin: Top BI and Analytics Platforms with G2 Reports

Build Hybrid Data Pipelines and Enable Universal Connectivity With CDF-PC Inbound Connections

In the second blog of the Universal Data Distribution blog series, we explored how Cloudera DataFlow for the Public Cloud (CDF-PC) can help you implement use cases like data lakehouse and data warehouse ingest, cybersecurity, and log optimization, as well as IoT and streaming data collection. A key requirement for these use cases is the ability not only to actively pull data from source systems but also to receive data that various sources push to the central distribution service.

Bridging the Productivity Chasm in Operational Transfer Pricing

COVID-19 introduced an unprecedented level of volatility in world markets, and the shockwaves that arrived in its wake exposed a wide chasm between two main types of multinational organizations: those with agile internal processes and those without. In a world built on complex and globalized supply chains, COVID-19 tested that internal agility, sometimes to the breaking point.

Build Your Own Analytics Platform: Advantages and Disadvantages of an Extensible-by-Plugins...

Never before has data been so prevalent in everything we do. Sorting out the best way to make sense of incoming terabytes of data has turned into an extreme sport. Likewise, it has become a life-or-death decision for every organization, regardless of its level of maturity, to determine an analytics strategy that harnesses the potential power of all that data without running the risk of overwhelming teams and paralyzing processes.

Should You Use Integrate.io for Ecommerce Data Warehouse Integration?

The Future of the Data Lakehouse - Open

Cloudera customers run some of the biggest data lakes on earth. These lakes power mission-critical, large-scale data analytics, business intelligence (BI), and machine learning use cases, including enterprise data warehouses. In recent years, the term “data lakehouse” was coined to describe this architectural pattern of tabular analytics over data in the data lake.