
Deploying an LLM ChatBot Augmented with Enterprise Data

The release of ChatGPT pushed the interest in and expectations of Large Language Model-based use cases to record heights. Every company is looking to experiment with, qualify, and eventually release LLM-based services to improve its internal operations and to level up its interactions with users and customers. At Cloudera, we have been working with our customers to help them benefit from this new wave of innovation.

The Art of Data Leadership | A discussion with Synchrony's Head of Provisioning, Ram Karnati

5 Key Ingredients to Accurate Cloud Data Budget Forecasting

Hey there! Have you ever found yourself scratching your head over unpredictable cloud data costs? It’s no secret that accurately forecasting cloud data spend can be a real headache. Fluctuating costs make it challenging to plan and allocate resources effectively, leaving businesses vulnerable to budget overruns and financial challenges. But don’t worry, we’ve got you covered!

Advancing the NodeSource Node.js Package Repo (Including User-Requested Upgrades!)

For over a decade, NodeSource has developed and maintained a Node.js package repository that has become the standard for production use globally. We are excited to announce some significant updates to this repo, including a large number of items requested by users.

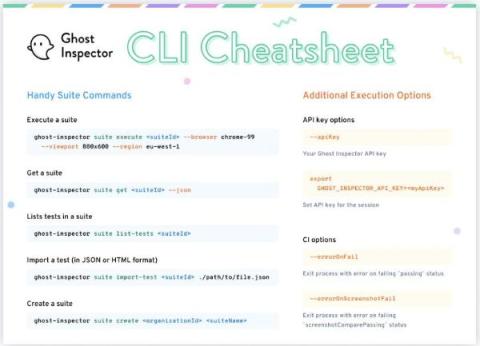

Mastering Test Automation with Command Line Interface + A Free CLI Cheatsheet!

At Ghost Inspector, we are passionate about empowering our users with testing solutions that ensure everything on their website or app works and looks the way it should. Our Command Line Interface (CLI) is one of those solutions, allowing you to simplify the process of scripting interactions with the Ghost Inspector API.

Top 5 API Integration Tools For 2023

API integration allows different software systems to connect with each other and exchange data seamlessly, and this process is the backbone of our digital world. While it is entirely possible to perform API integration manually, using dedicated API integration tools offers many advantages that make this process more efficient, reliable, and manageable.

Empowering Business Intelligence with Yellowfin's Automation Capabilities

New Insights into Federated API Gateways in Gartner Hype Cycle for APIs, 2023

Seeking greater insights into the role of federated API gateways? A good place to start is the recently published Gartner® Hype Cycle for APIs, 2023, which highlights federated API gateways as a technology at the “emerging” maturity stage with a “high” benefit rating.

Scaling machine learning with BigQuery ML inference engine

BigQuery ML inference engine lets you run inference over custom models, remote models, and pretrained models within your machine learning workflow.